Robots Turning

How do we prevent robots from turning on their human masters?

Science fiction is full of robots with artificial intelligence (AI) that can think and act independently and that turn on their creators. Stephen Hawking and other scientific prophets have worried about AI surpassing human intelligence, becoming able to reproduce itself and enslaving its former masters.

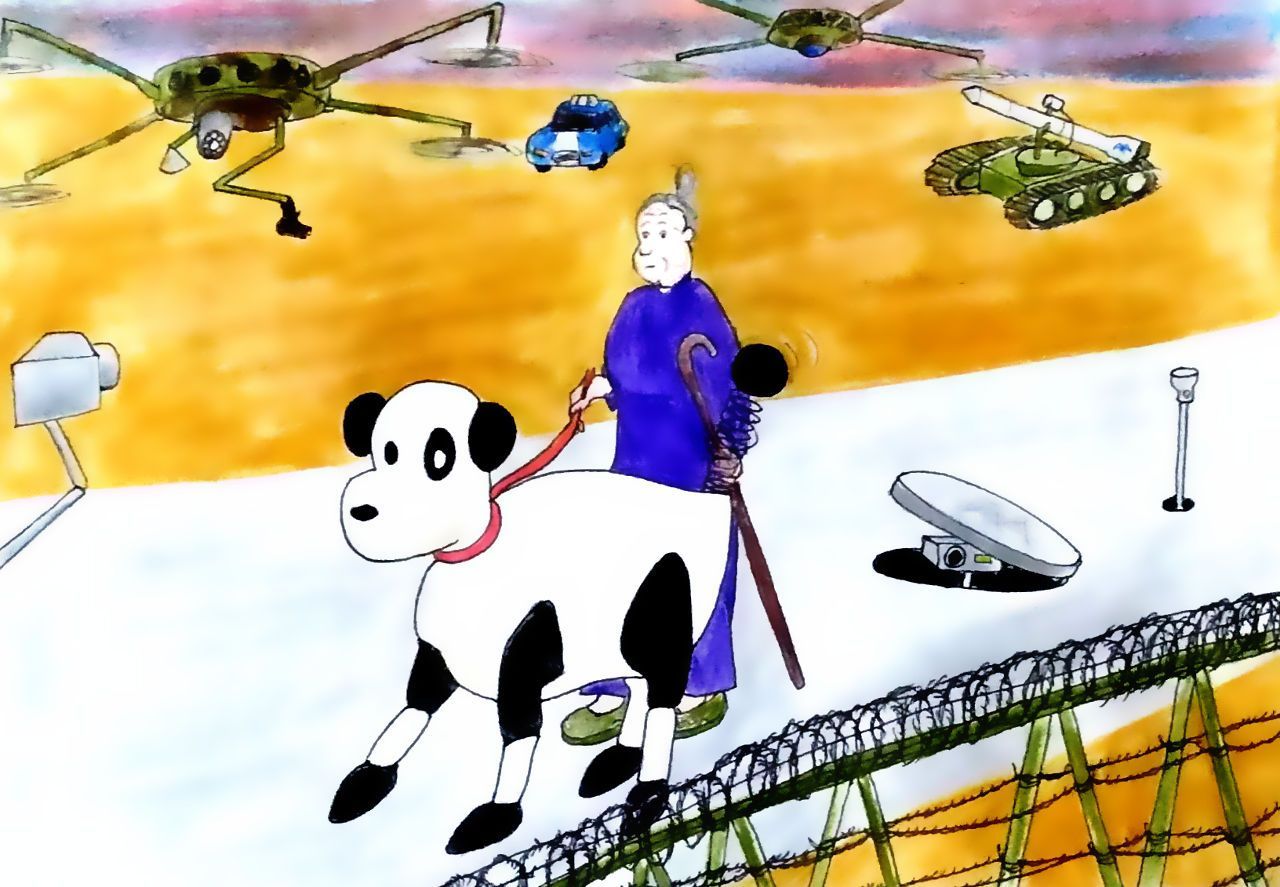

There is a presumption that robots and AI will be dispassionate and mechanical in dealing with their human neighbours on the planet; or worse, that they will copy evil human traits and become cold-blooded killers. Adding fuel to these fears, the militaries of several advanced nations are well ahead of civilian AI research in developing killer robots and drones for the battlefield.

Will this result in the military setting the direction of future civilian AI research, as civilian researchers seek to spin off what the military has achieved? If that happens, and if AI becomes conscious, the situation is grim indeed. We’d be superseded by an intelligence with no morality, conscience or empathy.

How can this fate be avoided? How can the robots and AI of the future be designed with heart as well as mind, capable of being our friends and not our enemies?

A biological precedent exists: the domestic dog.

Selectively bred from wild wolves over countless generations, dogs have become “Man’s best friend”. If trained correctly, they are worshipful, obedient, loyal, loving, forgiving, contrite and helpful: just the qualities we would seek in our future smart robots, and the same qualities exhibited by R2-D2 in “Star Wars”.

Even if robots become more intelligent than you, you’ll have nothing to fear — provided they have the personality and emotional attachment of your pet French poodle.

That raises the question: what is at the root of your pet poodle’s benign traits? After all, its DNA originated from that of the wolf, a calculating and vigorous hunter and killer. However, over multiple generations of selective breeding, humans have selected-out offspring with violent traits and selected-in (for further breeding) those with human-friendly and trainable traits. Over ten thousand years or more, this has produced your pet poodle.

What, then, underlies these good poodle traits? It is not so much intellect as emotion, which in turn is linked to needs and to the drives to satisfy those needs. So, at the basic level, if the puppy’s body is sending a signal that it is hungry, then it needs to be fed, and the human master is the one who provides the food and satisfies that need.

It was the physiologist Ivan Pavlov who presented an experimental dog with meat while measuring the saliva secreted from its mouth. After a number of trials, the dog salivated whenever it heard the footsteps of the assistant who usually brought the meat, even if no meat was presented. This association of one stimulus with another is called “classical conditioning”, an important discovery in the psychology of learning.

So in a Pavlovian sense, the poodle pup is conditioned to feel pleasure whenever it sees its human master.

The pleasure a pup feels on seeing its human provider ultimately becomes, at a higher level, love.

In the same way, all the good traits of the pup are derivatives of some basic biological or psychological need which is satisfied by the human master. These include the need for warmth, for safety from harm and for belonging. The need to hunt and to compete with other species for survival, so necessary for a wolf in the wild, has a violent edge to it. It did not have to be selected-in during the domestic breeding of the poodle, as humans provided the food and protection that such behaviour was meant to deliver.

Analogously, since our ideal robot does not have to go through the evolutionary process of survival of the fittest, it also does not need to be hardwired with the drive to kill and to compete with others.

So, if these basic needs of dogs have been integral to their selective breeding and training, how do we design their equivalents into a robot’s electrical circuits?

A puppy’s responses to these needs are emotional as well as cerebral, and emotions spring partly from the body, which is linked to the environment through signals sent by its sense organs. So our empathetic robot is going to need a body as well as a brain; or, if not an actual body, a generator of signals which simulate those of a body.

However, it may be possible to take a short cut here. Since our empathetic robot does not go through the process of evolution, it does not need basic drives such as seeking food. What’s more, we won’t have to leverage off those drives, as with the pup, to condition the robot to be human-friendly during training. If future humans are smart enough to make a robot conscious, then they should be able to hardwire human-friendliness directly into it. From medical interventions in humans, neurosurgeons know at least as much about how the emotions work as they do about how the mind functions.

But who is going to design and build such robots? Certainly not the military. They want robots that will obey orders without question. They don’t want them screwed up with emotions and empathy. They don’t want them with a conscience. They want cold-blooded killers. If these develop minds of their own, then humanity will truly be in trouble.

Similarly with the tech companies. They want self-driving cars which obey all the rules of the road and can execute manoeuvres with precision to avoid accidents. They would see no commercial advantage (and some operational disadvantages) in producing a self-driving car with emotions. They might program in decision pathways for ethical issues, but these would be purely mechanical.

The best chance of producing smart robots with heart is in the service industries, where empathy and kindness add value. Smart robots could be doctors, nurses, waiters, personal trainers, hairdressers, pets and companions. If these robots had hearts, they would deliver benefits to us humans for which we may be willing to pay.

Such robots may yet replace the family dog; and you wouldn’t even have to take it for a walk twice a day.

Furthermore, human-like robots may ultimately become sought-after companions for lonely women and men. Of course, no self-respecting woman (or man) would be seen dead on a date with a robot. She (or he) could not be so desperate. But in the privacy of the home, it may be a different matter.

Then again, a friendly and intelligent slave robot may be in much demand as a status symbol. It could be seen as a fashionable accessory like a Gucci handbag or a Maserati. To play such a role the robot would need to have a heart as well as a mind.

Demand for intelligent and kind robots could spur R&D in that direction. Look how the demand for PCs, laptops, the internet, iPads, iPhones and social media exploded beyond all expectations of their inventors and founders. All these inventions lie partly or wholly within the personal enrichment space, as would intelligent robots with a heart.

It has long been recognized by pioneers in the field that morality needs to be programmed into AI so that it does not harm humans or humanity. In this respect, Estonia has enacted a “Kratt Law” to regulate robots, named after a mythical creature of Estonian folklore. The kratt was a figure brought to life from hay or household objects. Once its owner had acquired a soul for it from the devil (in modern language, read “algorithm”), the kratt began to serve its master.

Along similar lines, the designers of self-driving cars are trying to develop rules for moral dilemmas, such as when the car must decide whether to run over a child on the road or swerve and hurtle over a cliff, killing all the adult passengers on board.

But is programmed morality enough to stop robots with free will from running amok? If they are independent thinkers, learning from their environment, they may well reprogram themselves and their offspring with a new set of moral rules which suit their ends and not ours. Morality, conscience and empathy must be hardwired at a deeper level within any robot’s DNA, as they are for your pet poodle. This hardwiring must be deep enough to put out of mind any desire by the robot to rewire its own circuits or those of its offspring.

In the meantime, do we trust governments to regulate robot production and to ensure the military and tech industries do not develop killer or amoral robots?

Governments might be induced to place a moratorium on producing robots without feelings, for example. I am all for such regulations, but we have to be realistic about their chances of success.

History is not very encouraging in this respect. Little success has been achieved in stopping other existential threats to humankind, such as nuclear weapons, global warming and gene editing of human germ cells to create designer babies (and inevitably new human or post-human species). Some nations have signed up to such moratoriums and some have not: nine nations still possess nuclear weapons; the USA is pulling out of the Paris Accord on climate change; and China has not banned human gene editing.

It seems that our best chance of creating AI and robots that will not destroy or enslave us, and of producing human-friendly robots, is for the personal enrichment sector and the service industries to take the lead and set the tone in AI development.

Playful robot puppies and kindly robot companions: human ingenuity may save us yet!