Ethics of Science

Is it possible to remove moral values from science?

You wake up after a long day at your 9–5 job and look out of your apartment window. Suddenly, you see walking computers controlled by artificial intelligence, cloned humans, and homicide after homicide.

That was, of course, just a story, albeit one that many people imagine when they think of separating ethics from science: a dystopian world where power lies in the push of a syringe!

Every day of your life is controlled and micromanaged by cloned humans. You have to take pills to reduce your chances of disease, and your name has been erased and replaced by a code number. Finally, after drawing the curtains of your apartment window, you realize how indifferent the world has become towards humanity, and that your species is no longer a priority.

What would happen if we really did separate ethics from science, and what would that mean for future generations? If we blindly develop technology without stopping to think where we’re going, things could go awfully wrong. It may not seem wrong at first, but then, when you look back at the end, you’ll wonder how you didn’t see it coming.

You should have known it would turn out this way. You should at least have suspected it when they took away your name and replaced it with a number in the name of “efficiency”. But then, how could you have guessed, when everyone around you was saying life was so good?

You were living in perfect weather: neither too hot nor too cold, unless, of course, someone hacked into your Smart Home setup. Outside, the weather was democratically controlled, and while some districts ended up with too many desert or tundra enthusiasts, most of them were quite pleasant. Your daily box of pills kept disease at bay, and though it made you feel woozy, your cloned humans were there to keep things running.

Clones! The greatest ethical innovation of the day. Everyone has a right to life, liberty, and the pursuit of happiness. But if the original “you” is enjoying all those rights, who cares if subordinate versions are made to work on something useful? Technology wanted to emulate biology, but in the end it worked the other way round.

How far can we let technology go? It’s not an easy question. In fact, there’s a whole field dedicated to it. This process of exploring the controversies, debates, and questions that we so desperately want to ask scientists is called bioethics.

The term ‘bioethics’ was coined in 1970 by the American biochemist Van Rensselaer Potter, but the concept has been around for much longer than that. The word ‘ethics’ comes from the Greek ‘ēthos’, meaning ‘character’ or ‘custom’, and Aristotle, the Greek philosopher, used it to discuss philosophical questions about how to live everyday life. Today, the meaning has shifted towards ‘morals’, a more personal sense of right and wrong.

And of course, ‘bioethics’ combines biology with ethics. While biologists spend their time developing technology and figuring out the next great cure, bioethicists ask whether creating such technology is actually a good idea. Is it okay to make clones, for example?

The fact that we talk about bioethics separately from biology makes it seem as though we can study biology without ethics, which in turn implies that moral values are not a necessity.

Does this mean science has already been separated from ethics? In some ways, one could say yes. You do find people who focus exclusively on research, without spending time worrying about the consequences, and you also find people for whom “worrying about the consequences” is their full job description.

That said, applications of science should certainly be monitored, supervised, and, of course, regulated by legislation. That is precisely how science benefits humanity: by understanding and restricting how its results are applied, we can enjoy the advantages of science while steering clear of the sticky areas.

Have you heard of Dolly the sheep? Here’s her unique story: the nucleus of a cell from an adult sheep was transferred into an unfertilised egg whose own nucleus had been removed. The egg was then induced to grow into a new sheep, Dolly, who was a genetic clone of the original sheep. This is what people imagine when they think of cloning, but cloning could also mean growing another copy of your lungs when they need to be replaced.

Then again, what if you took it to the extreme and made a copy of yourself, so as to have every part of your body readily available whenever needed? From the point of view of that copy, they are actually a person, one who is unfortunately robbed of organs and other body parts every once in a while!

Regulating science isn’t always easy. The problem is that laws take a long time to write, and even longer to catch up with new technology. Tax laws, for instance, assume that a business has a physical presence in a specific location. But when tech companies can move from Canada tonight to Ireland tomorrow, just because the tax rates are lower, that’s where the rules start to break down.

With proactive governments, though, that doesn’t always have to be the case.

In Estonian mythology, there is a well-known creature called the Kratt, a name Estonians now also use for algorithms and AI. The Kratt is made out of household objects. When its master acquires a soul for it from the devil, the Kratt comes alive, and its master can command it to do whatever they want.

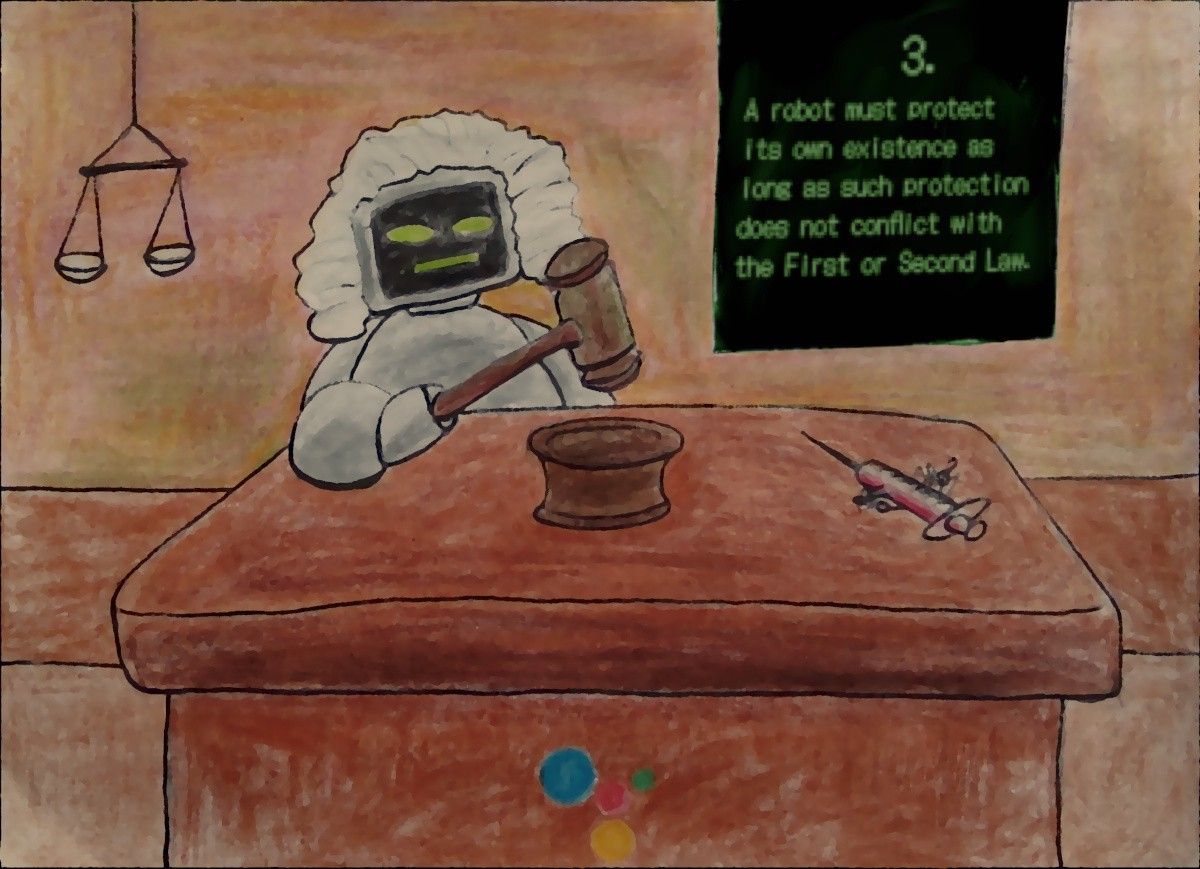

In 2018, the Estonian government laid out plans to build next-generation public services based on artificial intelligence (AI). But who is responsible when the AI messes up?

That question is precisely why the Estonian government put effort into developing a new “Kratt Law”. As proposed, it would give algorithms a separate legal status, similar to that of companies, making them “persons” in their own right. More importantly, though, care has been taken to decide who exactly takes the blame for slip-ups and how those affected should be compensated.

Aside from giving the law a relatable name, this shows that the government is actively keeping an eye on technology and making sure its rules stay up to date. When science happens, ethics must soon follow. I believe that science should initially be separated from ethics during the research process, but then merged back with ethics when it comes to the results and aftermath of that process.

If science were already separate from ethics, the proof would be all around us: we would be living in a world completely controlled by robots. And if it were separated, science would keep progressing and developing without any intervention, with no more debates and no more controversial opinions.

How would science benefit humanity if it doesn’t fit into our ethical molds? What sort of progress would it be if its intentions and influences on society were more negative than positive? Is well-conducted research without morals still worth it? Are prejudiced hypotheses worth exploring?

These questions aren’t easy to answer, but what we do know is that we have to keep asking them, because without them, we might as well be living in an apocalyptic world!

Before all that, though, we still need to understand: what are our ethics?

We are all living in the middle of a pandemic. But do we all agree on the safety protocols? Not really. Some of us choose to stay inside as much as possible, while others go outdoors a lot. Isn’t that a clash of ethical views? Each of us has our own theories and morals, and there doesn’t seem to be one action or behaviour that we all agree upon.

Nevertheless, we do agree on some things. For example, murder is unacceptable no matter where in the world you are. Laws everywhere treat murder as a crime and say that those responsible should be punished, in some places by death.

That, in essence, is the idea behind ‘moral universalism’.

Moral universalism holds that there are moral principles that apply to everyone. In other words, it implies that there is a foundation of ethics within every individual, a shared core in how we think about right and wrong, no matter our nationality, sexual orientation, religion, and so on.

The United Nations’ Universal Declaration of Human Rights contains 30 articles laying down the basic rights of all of humanity. Its 30th and final article even states that no state, group, or person may claim a right to destroy any of these rights.

Even with this declaration, though, societies tend to hold different beliefs.

Looking back at the example of killing, homicide is prohibited in all societies, right? As it turns out, that’s not always the case. Honour killing, the murder of someone seen as having brought shame or dishonour upon their family or community, is readily justified in some communities. As you can see, not all societies or institutions share the same moral stance. Ethics tends to be quite subjective: what’s right or wrong depends on the individual.

If these human rights apply to all societies, why are there [130 million girls](https://plancanada.ca/girls-education#:~:text=Girls'-,Education,the%20classroom%20%E2%80%93%20where%20they%20belong.) not in school? If we’re all equal and free, why are there more than 3.4 billion poor people in the world? These rights are valid and ethical, but they are no more than words on paper if the leaders who signed them don’t put them into practice and enforce them.

Ethics isn’t a “common to all societies” concept. Different societies may have some common aspects, but ultimately, the differences outweigh the similarities.

So how can science be glued to ethics, when ethics itself differs so much from one person to the next?

If we were to hold onto ethics, science would keep hitting dead ends, since you can never please everyone with the same foundation of morals.

Think of it like a staircase: the more discoveries and experiments science makes, the higher it climbs. However, whenever someone comes barging in with an opinion, moral, or ethical belief, they hit science right in the forehead and send it tumbling down the stairs. Take stem cell research, which is frequently halted instead of continuing to progress because of ethical concerns over stem cells derived from human embryos.

On the other hand, once science has climbed high and reached the top, we need ethics to make sure it doesn’t fall off the edge.

For the most part, when scientific work is halted, it tends to be because of ethical concerns. But what’s wrong with that? Don’t we need to make sure that it benefits us first? Not every scientist has humanity’s best interests at heart, and even if they did, things can often go wrong. So wouldn’t we rather be safe than sorry?

This whole debate around science and ethics forces us to examine the true intentions behind scientific practice, since it leaves us wondering what the aftermath of an unethical science would look like.

What then becomes the point of the research and development if it’s not benefitting humanity? Mere curiosity? Boredom?

Let’s face it, since Homo sapiens (humans) are the most intellectual, innovative, and adaptive species, it’s fair to say that we have some sense of superiority. Though it sounds very snobby and pretentious to say that we’re superior to other species, the raw truth is that we’ll pick humans over koalas.

There are many elements of life that are normalised when they happen to other species but would be seen as ludicrous for humans. For example, we’re fine with using animal cells in vaccines but would be shocked at the thought of using our own cells!

Because we regard humans as superior to other species, we decide that everything needs to benefit us.

Having ethics constantly tug at science at every stage of development doesn’t let new ideas develop in the first place. We’ll never make any progress towards bettering the world without first letting go of ethics.

Then again, that defies the basic purpose of science: to help humanity. We need ethics to stay with science, or else our global objective is lost.

So do we need to separate ethics from science? The answer is not a clear ‘yes’ or ‘no’, but something in between. We have to decide when to apply ethics, and when to leave it in the back seat while science does its thing. As for how we decide which is which… well, that’s where ethics comes in.

What gets you most is that it all started with good intentions. Nobody wanted to create a killer A.I.; they just wanted to create a cure for cancer. Breathlessly, enthusiastically, and brushing aside concerns about “too powerful” and “will it listen”, they put their hearts and souls into crafting the best algorithm ever.

They gave it the one and only command: “Eradicate cancer from the planet”.

And so the A.I. thought and meditated and calculated, and came up with a solution. By getting rid of humans, who get cancer, it would eradicate cancer as well.

And now, slowly and methodically, it’s doing its job, in the best and most efficient way it knows.

You open your curtains one more time, and realize that it’s just another sunny day.

Think different: These are just my thoughts and opinions, and as such they’re loosely held. Feel free to propose arguments or change my mind in the comments!

Connect with me

- Email: kawtar.karmouni13@gmail.com

- Newsletter